MPI Test Suite Test Descriptions

Each of the collapsible regions below corresponds to one of the test groups displayed in the MPI Test Suite Results table. Click the name of a test group to see the individual tests that comprise it. Some tests appear in multiple groups.

Topology

The network topology tests examine the operation of specific communication patterns, such as Cartesian and graph topologies.

MPI_Cart_create basic

This test creates a Cartesian mesh and tests for errors.

MPI_Cartdim_get zero-dim

Check that the MPI implementation properly handles zero-dimensional Cartesian communicators. The original standard implies that these should be consistent with higher-dimensional topologies and therefore should work with any MPI implementation. MPI 2.1 made this requirement explicit.

MPI_Cart_map basic

This test creates a Cartesian map and tests for errors.

MPI_Cart_shift basic

This test exercises MPI_Cart_shift().

MPI_Cart_sub basic

This test exercises MPI_Cart_sub().

MPI_Dims_create nodes

This test uses multiple variations of the arguments to MPI_Dims_create() and checks whether the product of the ndims (number of dimensions) returned dimensions equals nnodes (number of nodes), thereby determining whether the decomposition is correct. The test also checks for compliance with section 6.5 of the MPI standard regarding decomposition with increasing dimensions. The test considers dimensions 2-4.
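
The core check can be sketched without MPI. The snippet below is a minimal pure-Python illustration, not the suite's code: `check_decomposition` is a hypothetical helper that takes a `dims` list such as MPI_Dims_create() might return when every entry is left for the routine to choose. It verifies that the entries multiply to nnodes and appear in non-increasing order.

```python
from math import prod


def check_decomposition(nnodes, dims):
    """Return True if dims is a valid decomposition of nnodes.

    Hypothetical helper: dims stands in for the array filled by
    MPI_Dims_create() when every entry was left for the routine to choose.
    """
    product_ok = prod(dims) == nnodes
    # Entries chosen by the routine should be in non-increasing order.
    order_ok = all(a >= b for a, b in zip(dims, dims[1:]))
    return product_ok and order_ok


print(check_decomposition(12, [4, 3]))     # valid 2-D decomposition
print(check_decomposition(12, [3, 4]))     # right product, wrong order
print(check_decomposition(12, [2, 2, 2]))  # product is 8, not 12
```

The ordering check only applies to entries that the routine selects itself. Entries fixed by the caller would need to be excluded from it.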

MPI_Dims_create special 2d/4d

This test is similar to topo/dims1 but only exercises dimensions 2 and 4, including test cases where all dimensions are specified.

MPI_Dims_create special 3d/4d

This test is similar to topo/dims1 but only considers special cases using dimensions 3 and 4.

MPI_Dist_graph_create

This test exercises MPI_Dist_graph_create() and MPI_Dist_graph_create_adjacent().

MPI_Graph_create null/dup

Create a communicator with a graph that contains null edges and one that contains duplicate edges.

MPI_Graph_create zero procs

Create a communicator with a graph that contains no processes.

MPI_Graph_map basic

Simple test of MPI_Graph_map().

MPI_Topo_test datatypes

Check that MPI_Topo_test() returns the correct type, including MPI_UNDEFINED.

MPI_Topo_test dgraph

Specify a distributed graph of a bidirectional ring of the MPI_COMM_WORLD communicator. Thus each node in the graph has a left and right neighbor.
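
As a rough illustration of the neighbor layout described above, the following pure-Python sketch computes the left and right neighbors of each rank in a periodic ring. It makes no MPI calls; `ring_neighbors` and the process count of 4 are assumptions chosen for the example.

```python
def ring_neighbors(rank, size):
    """Return (left, right) neighbors of rank in a periodic ring of size ranks."""
    left = (rank - 1) % size
    right = (rank + 1) % size
    return left, right


size = 4  # assumed number of processes, for illustration only
for rank in range(size):
    print(rank, ring_neighbors(rank, size))
```

In a distributed graph built this way, each rank would list these two neighbors as both its sources and its destinations, which is what makes the ring bidirectional.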

MPI_Topo_test dup

Create a Cartesian topology, get its characteristics, then dup it and check that the new communicator has the same properties.

Neighborhood collectives

A basic test for the 10 (5 patterns x {blocking,non-blocking}) MPI-3 neighborhood collective routines.

Basic Functionality

This group features tests that emphasize basic MPI functionality, such as initializing MPI and retrieving a process's rank.

Basic send/recv

This is a basic test of send/receive with a barrier, using MPI_Send() and MPI_Recv().

Const cast

This test is designed to test the new MPI-3.0 const cast applied to a "const *" buffer pointer.

Elapsed walltime

This test measures how accurately MPI can measure 1 second.
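
The idea behind such a timing check can be sketched as follows. This is only an analogy in pure Python: `time.perf_counter()` stands in for MPI_Wtime(), and `timing_error` is a hypothetical helper, not part of the suite.

```python
import time


def timing_error(duration=1.0):
    """Sleep for duration seconds and return measured minus requested time."""
    start = time.perf_counter()  # stand-in for a first MPI_Wtime() call
    time.sleep(duration)
    elapsed = time.perf_counter() - start
    return elapsed - duration


print(f"timing error: {timing_error():.6f} s")
```

A small positive error is normal, since a sleep generally lasts at least as long as requested. A real test would compare the error against a tolerance of its own choosing.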

Generalized request basic

Simple test of generalized requests. This simple code allows us to check that requests can be created, tested, and waited on in the case where the request is complete before the wait is called.

Init arguments

In MPI-1.1, it is explicitly stated that an implementation is allowed to require that the arguments argc and argv passed by an application to MPI_Init in C be the same arguments passed into the application as the arguments to main. In MPI-2, implementations are not allowed to impose this requirement. Conforming implementations of MPI allow applications to pass NULL for both the argc and argv arguments of MPI_Init(). This test prints the result of the error status of MPI_Init(). If the test completes without error, it reports 'No errors.'

Input queuing

Test of a large number of MPI datatype messages with no preposted receive, so that an MPI implementation may have to queue up messages on the sending side. Uses MPI_Type_create_indexed_block() to create the send datatype and receives data as ints.

Intracomm communicator

This program calls MPI_Reduce with all Intracomm Communicators.

Isend and Request_free

Test multiple non-blocking send routines with MPI_Request_free(). Creates non-blocking messages with MPI_Isend(), MPI_Ibsend(), MPI_Issend(), and MPI_Irsend(), then frees each request.

Large send/recv

This test sends the length of a message, followed by the message body.

MPI_ANY_{SOURCE,TAG}

This test uses MPI_ANY_SOURCE and MPI_ANY_TAG in repeated MPI_Irecv() calls. One implementation delivered incorrect data when using both ANY_SOURCE and ANY_TAG.

MPI_Abort() return exit

This program calls MPI_Abort and confirms that the exit status in the call is returned to the invoking environment.

MPI Attributes test

This is a test of creating and inserting attributes in different orders to ensure that the list management code handles all cases.

MPI_BOTTOM basic

Simple test using MPI_BOTTOM for MPI_Send() and MPI_Recv().

MPI_Bsend alignment

Simple test for MPI_Bsend() that sends and receives multiple messages with message sizes chosen to expose alignment problems.

MPI_Bsend buffer alignment

Test bsend with a buffer with alignment between 1 and 7 bytes.

MPI_Bsend detach

Test the handling of MPI_Bsend() operations when a detach occurs between MPI_Bsend() and MPI_Recv(). Uses busy wait to ensure detach occurs between MPI routines and tests with a selection of communicators.

MPI_Bsend() intercomm

Simple test for MPI_Bsend() that creates an intercommunicator with two evenly sized groups and then repeatedly sends and receives messages between groups.

MPI_Bsend ordered

Test bsend message handling where different messages are received in different orders.

MPI_Bsend repeat

Simple test for MPI_Bsend() that repeatedly sends and receives messages.

MPI_Bsend with init and start

Simple test for MPI_Bsend() that uses MPI_Bsend_init() to create a persistent communication request and then repeatedly sends and receives messages. Includes tests using MPI_Start() and MPI_Startall().

MPI_Cancel completed sends

Calls MPI_Isend(), forces it to complete with a barrier, calls MPI_Cancel(), then checks cancel status. Such a cancel operation should silently fail. This test returns a failure status if the cancel succeeds.

MPI_Cancel sends

Test of various send cancel calls. Sends messages with MPI_Isend(), MPI_Ibsend(), MPI_Irsend(), and MPI_Issend() and then immediately cancels them. Then verifies message was cancelled and was not received by destination process.

MPI_Finalized() test

This tests whether MPI_Finalized() works correctly if MPI_Init() was not called. This behaviour is not defined by the MPI standard, so this test is not guaranteed to pass.

MPI_Get_library_version test

This MPI-3.0 test returns the MPI library version.

MPI_Get_version() test

This MPI-3.0 test prints the MPI version. If running a version of MPI < 3.0, it simply prints "No Errors".

MPI_Ibsend repeat

Simple test for MPI_Ibsend() that repeatedly sends and receives messages.

MPI_{Is,Query}_thread() test

This test examines the MPI_Is_thread_main() and MPI_Query_thread() calls after MPI has been initialized using MPI_Init_thread().

MPI_Isend root cancel

This test case has the root send a non-blocking synchronous message to itself, cancel it, then attempt to read it.

MPI_Isend root

This is a simple test case of sending a non-blocking message to the root process. Includes test with a null pointer. This test uses a single process.

MPI_Isend root probe

This is a simple test case of the root sending a message to itself and probing this message.

MPI_Mprobe() series

This tests MPI_Mprobe() using a series of tests. Includes tests with send and Mprobe+Mrecv, send and Mprobe+Imrecv, send and Improbe+Mrecv, send and Improbe+Imrecv, Mprobe+Mrecv with MPI_PROC_NULL, Mprobe+Imrecv with MPI_PROC_NULL, Improbe+Mrecv with MPI_PROC_NULL, Improbe+Imrecv, and a test to verify that MPI_Message_c2f() and MPI_Message_f2c() are present.

MPI_Probe() null source

This program checks that MPI_Iprobe() and MPI_Probe() correctly handle a source of MPI_PROC_NULL.

MPI_Probe() unexpected

This program verifies that MPI_Probe() is operating properly in the face of unexpected messages arriving after MPI_Probe() has been called. This program may hang if MPI_Probe() does not return when the message finally arrives. Tested with a variety of message sizes and number of messages.

MPI_Request_get_status

Test MPI_Request_get_status(). Sends a message with MPI_Ssend() and creates a receive request with MPI_Irecv(). Verifies that MPI_Request_get_status() does not return correct values prior to MPI_Wait() and returns correct values afterwards. The test also checks that MPI_REQUEST_NULL and MPI_STATUS_IGNORE work as arguments, as required beginning with MPI-2.2.

MPI_Request many irecv

This test issues many non-blocking receives followed by many blocking MPI_Send() calls, then issues an MPI_Wait() on all pending receives using multiple processes and increasing array sizes. This test may fail due to bugs in the handling of request completions or in queue operations.

MPI_{Send,Receive} basic

This is a simple test using MPI_Send(), MPI_Recv(), MPI_Sendrecv(), and MPI_Sendrecv_replace() to send messages between two processes using a selection of communicators and datatypes and increasing array sizes.

MPI_{Send,Receive} large backoff

Head to head MPI_Send() and MPI_Recv() to test backoff in device when large messages are being transferred. Includes a test that has one process sleep prior to calling send and recv.

MPI_{Send,Receive} vector

This is a simple test of MPI_Send() and MPI_Recv() using MPI_Type_vector() to create datatypes with an increasing number of blocks.

MPI_Send intercomm

Simple test of intercommunicator send and receive using a selection of intercommunicators.

MPI_Status large count

This test manipulates an MPI status object using MPI_Status_set_elements_x() with various large count values and verifies that MPI_Get_elements_x() and MPI_Test_cancelled() produce the correct values.

MPI_Test pt2pt

This test program checks that the point-to-point completion routines can be applied to an inactive persistent request, as required by the MPI-1 standard (see section 3.7.3). It is allowed to call MPI_TEST with a null or inactive request argument. In such a case the operation returns with flag = true and an empty status. Tests both persistent send and persistent receive requests.

MPI_Waitany basic

This is a simple test of MPI_Waitany().

MPI_Waitany comprehensive

This program checks that the various MPI_Test and MPI_Wait routines allow both null requests and in the multiple completion cases, empty lists of requests.

MPI_Wtime() test

This program tests the ability of mpiexec to time out a process after no more than 3 minutes. By default, it will run for 30 seconds.

Many send/cancel order

Test of various receive cancel calls. Creates multiple receive requests then cancels three requests in a more interesting order to ensure the queue operation works properly. The other request receives the message.

Message patterns

This test sends/receives a number of messages in different patterns to make sure that all messages are received in the order they are sent. Two processes are used in the test.

Persistent send/cancel

Test cancelling persistent send calls. Tests various persistent send calls including MPI_Send_init(), MPI_Bsend_init(), MPI_Rsend_init(), and MPI_Ssend_init() followed by calls to MPI_Cancel().

Ping flood

This test sends a large number of messages in a loop in the source process, and receives a large number of messages in a loop in the destination process using a selection of communicators, datatypes, and array sizes.

Preposted receive

Test root sending to self with a preposted receive for a selection of datatypes and increasing array sizes. Includes tests for MPI_Send(), MPI_Ssend(), and MPI_Rsend().

Race condition

Repeatedly sends messages to the root from all other processes. Run this test with 8 processes. This test was submitted as a result of problems seen with the ch3:shm device on a Solaris system. The symptom is that the test hangs; this is due to losing a message, probably due to a race condition in a message-queue update.

Sendrecv from/to

This test uses MPI_Sendrecv() sent from and to rank=0. Includes test for MPI_Sendrecv_replace().

Simple thread finalize

This is a simple test that MPI_Finalize() exits, so the only action is to write "No errors".

Simple thread initialize

The test initializes a thread, then calls MPI_Finalize() and prints "No errors".

Communicator Testing

This group features tests that emphasize MPI calls that create, manipulate, and delete MPI Communicators.

Comm_create_group excl 4 rank

This test using 4 processes creates a group with the even processes using MPI_Group_excl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group excl 8 rank

This test using 8 processes creates a group with the even processes using MPI_Group_excl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group incl 2 rank

This test using 2 processes creates a group with ranks less than size/2 using MPI_Group_range_incl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group incl 4 rank

This test using 4 processes creates a group with ranks less than size/2 using MPI_Group_range_incl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group incl 8 rank

This test using 8 processes creates a group with ranks less than size/2 using MPI_Group_range_incl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group random 2 rank

This test using 2 processes creates and frees groups by randomly adding processes to a group, then creating a communicator with the group.

Comm_create_group random 4 rank

This test using 4 processes creates and frees groups by randomly adding processes to a group, then creating a communicator with the group.

Comm_create_group random 8 rank

This test using 8 processes creates and frees groups by randomly adding processes to a group, then creating a communicator with the group.

Comm_create group tests

Simple test that gets the group of an intercommunicator using MPI_Group_rank() and MPI_Group_size() using a selection of intercommunicators.

Comm_create intercommunicators

This program tests MPI_Comm_create() using a selection of intercommunicators. Creates a new communicator from an intercommunicator, duplicates the communicator, and verifies that it works. Includes test with one side of intercommunicator being set with MPI_GROUP_EMPTY.

Comm creation comprehensive

Check that Communicators can be created from various subsets of the processes in the communicator. Uses MPI_Comm_group(), MPI_Group_range_incl(), and MPI_Comm_dup() to create new communicators.

Comm_{dup,free} contexts

This program tests the allocation and deallocation of contexts by using MPI_Comm_dup() to create many communicators in batches and then freeing them in batches.

Comm_dup basic

This test exercises MPI_Comm_dup() by duplicating a communicator, checking basic properties, and communicating with this new communicator.

Comm_dup contexts

Check that communicators have separate contexts. We do this by setting up non-blocking receives on two communicators and then sending to them. If the contexts are different, tests on the unsatisfied communicator should indicate no available message. Tested using a selection of intercommunicators.

Comm_{get,set}_name basic

Simple test for MPI_Comm_get_name() using a selection of communicators.

Comm_idup 2 rank

Multiple tests using 2 processes that make rank 0 wait in a blocking receive until all other processes have called MPI_Comm_idup(), then call idup afterwards. Should ensure that idup doesn't deadlock. Includes a test using an intercommunicator.

Comm_idup 4 rank

Multiple tests using 4 processes that make rank 0 wait in a blocking receive until all other processes have called MPI_Comm_idup(), then call idup afterwards. Should ensure that idup doesn't deadlock. Includes a test using an intercommunicator.

Comm_idup 9 rank

Multiple tests using 9 processes that make rank 0 wait in a blocking receive until all other processes have called MPI_Comm_idup(), then call idup afterwards. Should ensure that idup doesn't deadlock. Includes a test using an intercommunicator.

Comm_idup multi

Simple test creating multiple communicators with MPI_Comm_idup.

Comm_idup overlap

Each pair of processes uses MPI_Comm_idup() to dup the communicator such that the dups are overlapping. If this were done with MPI_Comm_dup(), it would deadlock.

Comm_split basic

Simple test for MPI_Comm_split().

Comm_split intercommunicators

This tests MPI_Comm_split() using a selection of intercommunicators. The split communicator is tested using simple send and receive routines.

Comm_split key order

This test ensures that MPI_Comm_split breaks ties in key values by using the original rank in the input communicator. This typically corresponds to the difference between using a stable sort or using an unstable sort. It checks all sizes from 1..comm_size(world)-1, so this test does not need to be run multiple times at process counts from a higher-level test driver.
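
The tie-breaking rule can be illustrated with a stable sort. The sketch below is pure Python with no MPI calls; `split_ranks` is a hypothetical helper that models the ordering within a single color group, under the assumption that ranks are ordered by key with ties broken by original rank.

```python
def split_ranks(keys):
    """Map old rank -> new rank for one color group.

    keys[i] is the key supplied by old rank i. Ties in key are broken
    by old rank, which a stable sort over ranks 0..n-1 gives for free.
    """
    order = sorted(range(len(keys)), key=lambda old: keys[old])  # stable
    return {old: new for new, old in enumerate(order)}


# Old ranks 0..3 all pass the same key, so the order must be unchanged.
print(split_ranks([7, 7, 7, 7]))   # {0: 0, 1: 1, 2: 2, 3: 3}
# Mixed keys: ranks 1 and 3 tie on key 0 and keep their relative order.
print(split_ranks([5, 0, 5, 0]))   # {1: 0, 3: 1, 0: 2, 2: 3}
```

An unstable sort could legally swap ranks 1 and 3 in the second case, which is the kind of discrepancy this test is meant to expose.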

Comm_split_type basic

Tests MPI_Comm_split_type() including a test using MPI_UNDEFINED.

Comm_with_info dup 2 rank

This test exercises MPI_Comm_dup_with_info() with 2 processes by setting the info for a communicator, duplicating it, and then testing the communicator.

Comm_with_info dup 4 rank

This test exercises MPI_Comm_dup_with_info() with 4 processes by setting the info for a communicator, duplicating it, and then testing the communicator.

Comm_with_info dup 9 rank

This test exercises MPI_Comm_dup_with_info() with 9 processes by setting the info for a communicator, duplicating it, and then testing the communicator.

Context split

This test uses MPI_Comm_split() to repeatedly create and free communicators. This check is intended to fail if there is a leak of context ids. This test needs to run longer than many tests because it tries to exhaust the number of context ids. The for loop uses 10000 iterations, which is adequate for MPICH (with only about 1k context ids available).

Intercomm_create basic

A simple test of MPI_Intercomm_create() that creates an intercommunicator and verifies that it works.

Intercomm_create many rank 2x2

Test for MPI_Intercomm_create() using at least 33 processes that exercises a loop bounds bug by creating and freeing two intercommunicators with two processes each.

Intercomm_merge

Test MPI_Intercomm_merge() using a selection of intercommunicators. Includes multiple tests with different choices for the high value.

Intercomm probe

Test MPI_Probe() with a selection of intercommunicators. Creates an intercommunicator, probes it, and then frees it.

MPI_Info_create basic

Simple test for MPI_Comm_{set,get}_info.

Multiple threads context dup

This test creates communicators concurrently in different threads.

Multiple threads context idup

This test creates communicators concurrently, non-blocking, in different threads.

Multiple threads dup leak

This test repeatedly duplicates and frees communicators with multiple threads concurrently to stress the multithreaded aspects of the context ID allocation code. Thanks to IBM for providing the original version of this test.

Simple thread comm dup

This is a simple test of threads in MPI with communicator duplication.

Simple thread comm idup

This is a simple test of threads in MPI with non-blocking communicator duplication.

Thread Group creation

Every thread participates in a distinct MPI_Comm_create group, distinguished by its thread-id (used as the tag). Threads on even ranks join an even comm and threads on odd ranks join an odd comm.

Threaded group

In this test a number of threads are created with a distinct MPI communicator (or comm) group distinguished by its thread-id (used as a tag). Threads on even ranks join an even comm and threads on odd ranks join the odd comm.

Error Processing

This group features tests of MPI error processing.

Error Handling

Reports the default action taken on an error. It also reports if error handling can be changed to "returns", and if so, if this functions properly.

File IO error handlers

This test exercises MPI I/O and MPI error handling techniques.

MPI_Abort() return exit

This program calls MPI_Abort and confirms that the exit status in the call is returned to the invoking environment.

MPI_Add_error_class basic

Create NCLASSES new classes, each with 5 codes (160 total).

MPI_Comm_errhandler basic

Test MPI_Comm_{set,call}_errhandler.

MPI_Error_string basic

Test that prints out MPI error codes from 0-53.

MPI_Error_string error class

Simple test where an MPI error class is created, and an error string introduced for that class.

User error handling 1 rank

Ensure that setting a user-defined error handler on predefined communicators does not cause a problem at finalize time. Regression test for former issue. Runs on 1 rank.

User error handling 2 rank

Ensure that setting a user-defined error handler on predefined communicators does not cause a problem at finalize time. Regression test for former issue. Runs on 2 ranks.

UTK Test Suite

This group features the test suite developed at the University of Tennessee, Knoxville for MPI-2.2 and earlier specifications. Though technically not a functional group, it was retained to allow comparison with the previous benchmark suite.

Alloc_mem

Simple check to see if MPI_Alloc_mem() is supported.

Assignment constants

Test for named constants supported in MPI-1.0 and higher. The test is a Perl script that constructs a small separate main program in either C or FORTRAN for each constant. The constants for this test are used to assign a value to a const integer type in C and an integer type in Fortran. This test is the de facto test for any constant recognized by the compiler. NOTE: The constants used in this test are tested against both C and FORTRAN compilers. Some of the constants are optional and may not be supported by the MPI implementation. Failure to verify these constants does not necessarily constitute failure of the MPI implementation to satisfy the MPI specifications. ISSUE: This test may time out if separate program executions initialize slowly.

C/Fortran interoperability supported

Checks if the C-Fortran (F77) interoperability functions are supported using the MPI-2.2 specification.

Communicator attributes

Returns all communicator attributes that are not supported. The test is run as a single process MPI job and fails if any attributes are not supported.

Compiletime constants

The MPI-3.0 specifications require that some named constants be known at compile time. The report includes a record for each constant of this class in the form "X MPI_CONSTANT is [not] verified by METHOD" where X is either 'c' for the C compiler, or 'F' for the FORTRAN 77 compiler. For a C language compile, the constant is used as a case label in a switch statement. For a FORTRAN language compile, the constant is assigned to a PARAMETER. The report summarizes with the number of constants for each compiler that were successfully verified.

Datatypes

Tests for the presence of constants from MPI-1.0 and higher. It constructs small separate main programs in either C, FORTRAN, or C++ for each datatype. It fails if any datatype is not present. ISSUE: This test may time out if separate program executions initialize slowly.

Deprecated routines

Checks all MPI deprecated routines as of MPI-2.2, but not including routines removed by MPI-3 if this is an MPI-3 implementation.

Error Handling

Reports the default action taken on an error. It also reports if error handling can be changed to "returns", and if so, if this functions properly.

Errorcodes

The MPI-3.0 specifications require that the same constants be available for the C language and FORTRAN. The report includes a record for each errorcode of the form "X MPI_ERRCODE is [not] verified" where X is either 'c' for the C compiler, or 'F' for the FORTRAN 77 compiler. The report summarizes with the number of errorcodes for each compiler that were successfully verified.

Extended collectives

Checks if "extended collectives" are supported, i.e., collective operations with MPI-2 intercommunicators.

Init arguments

In MPI-1.1, it is explicitly stated that an implementation is allowed to require that the arguments argc and argv passed by an application to MPI_Init in C be the same arguments passed into the application as the arguments to main. In MPI-2, implementations are not allowed to impose this requirement. Conforming implementations of MPI allow applications to pass NULL for both the argc and argv arguments of MPI_Init(). This test prints the result of the error status of MPI_Init(). If the test completes without error, it reports 'No errors.'

MPI-2 replaced routines

Checks the presence of all MPI-2.2 routines that replaced deprecated routines.

MPI-2 type routines

This test checks that a subset of MPI-2 routines that replaced MPI-1 routines work correctly.

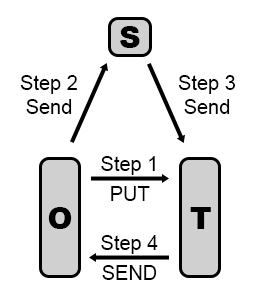

Master/slave

This test running as a single MPI process spawns four slave processes using MPI_Comm_spawn(). The master process sends and receives a message from each slave. If the test completes, it will report 'No errors.', otherwise specific error messages are listed.

One-sided communication

Checks MPI-2.2 one-sided communication modes reporting those that are not defined. If the test compiles, then "No errors" is reported, else, all undefined modes are reported as "not defined."

One-sided fences

Verifies that one-sided communication with active target synchronization with fences functions properly. If all operations succeed, one-sided communication with active target synchronization with fences is reported as supported. If one or more operations fail, the failures are reported and one-sided-communication with active target synchronization with fences is reported as NOT supported.

One-sided passive

Verifies that one-sided communication with passive target synchronization functions properly. If all operations succeed, one-sided communication with passive target synchronization is reported as supported. If one or more operations fail, the failures are reported and one-sided communication with passive target synchronization is reported as NOT supported.

One-sided post

Verifies one-sided-communication with active target synchronization with post/start/complete/wait functions properly. If all operations succeed, one-sided communication with active target synchronization with post/start/complete/wait is reported as supported. If one or more operations fail, the failures are reported and one-sided-communication with active target synchronization with post/start/complete/wait is reported as NOT supported.

One-sided routines

Reports if one-sided communication routines are defined. If this test compiles, one-sided communication is reported as defined, otherwise "not supported".

Thread support

Reports the level of thread support provided. This is either MPI_THREAD_SINGLE, MPI_THREAD_FUNNELED, MPI_THREAD_SERIALIZED, or MPI_THREAD_MULTIPLE.

Group Communicator

This group features tests of MPI communicator group calls.

MPI_Group_Translate_ranks perf

Measure and compare the relative performance of MPI_Group_translate_ranks with small and large group2 sizes but a constant number of ranks. This serves as a performance sanity check for the Scalasca use case where we translate to MPI_COMM_WORLD ranks. The performance should only depend on the number of ranks passed, not the size of either group (especially group2). This test is probably only meaningful for large-ish process counts.

MPI_Group_excl basic

This is a test of MPI_Group_excl().

MPI_Group_incl basic

This is a simple test of creating a group array.

MPI_Group_incl empty

This is a test to determine if an empty group can be created.

MPI_Group irregular

This is a test comparing small groups against larger groups. It uses groups with irregular members (to bypass optimizations in group_translate_ranks for simple groups).

MPI_Group_translate_ranks

This is a test of MPI_Group_translate_ranks().

Win_get_group basic

This is a simple test of MPI_Win_get_group() for a selection of communicators.

Parallel Input/Output

This group features tests that involve MPI parallel input/output operations.

Asynchronous IO basic

Test asynchronous I/O with multiple completion. Each process writes to separate files and reads them back.

Asynchronous IO collective

Test asynchronous collective reading and writing. Each process asynchronously writes to a file, then reads it back.

Asynchronous IO contig

Test contiguous asynchronous I/O. Each process writes to separate files and reads them back. The file name is taken as a command-line argument, and the process rank is appended to it.

Asynchronous IO non-contig

Tests noncontiguous reads/writes using non-blocking I/O.

File IO error handlers

This test exercises MPI I/O and MPI error handling techniques.

MPI_File_get_type_extent

Test MPI_File_get_type_extent().

MPI_File_set_view displacement_current

Test set_view with DISPLACEMENT_CURRENT. This test reads a header then sets the view to every "size" int, using set view and current displacement. The file is first written using a combination of collective and ordered writes.

MPI_File_write_ordered basic

Test reading and writing ordered output.

MPI_File_write_ordered zero

Test reading and writing data with zero length. The test then looks for errors in the MPI IO routines and reports any that were found, otherwise "No errors" is reported.

MPI_Info_set file view

Test file_set_view. Access style is explicitly described as modifiable. Values include read_once, read_mostly, write_once, write_mostly, random.

MPI_Type_create_resized basic

Test file views with MPI_Type_create_resized.

MPI_Type_create_resized x2

Test file views with MPI_Type_create_resized, with a resizing of the resized type.

Datatypes

This group features tests that involve named MPI and user defined datatypes.

Aint add and diff

Tests the MPI 3.1 standard functions MPI_Aint_diff and MPI_Aint_add.

Blockindexed contiguous convert

This test converts a block indexed datatype to a contiguous datatype.

Blockindexed contiguous zero

This tests the behavior with a zero-count blockindexed datatype.

C++ datatypes

This test checks for the existence of four new C++ named predefined datatypes that should be accessible from C and Fortran.

Datatype commit-free-commit

This test creates a valid datatype, commits and frees the datatype, then repeats the process for a second datatype of the same size.

Datatype get structs

This test was motivated by the failure of an example program for RMA involving simple operations on a struct that included a struct. The observed failure was a SEGV in the MPI_Get.

Datatype inclusive typename

Sample some datatypes. See 8.4, "Naming Objects" in MPI-2. The default name is the same as the datatype name.

Datatype match size

Test of type_match_size. Check the most likely cases. Note that it is an error to free the type returned by MPI_Type_match_size. Also note that it is an error to request a size not supported by the compiler, so Type_match_size should generate an error in that case.

Datatype reference count

Test to check if freed datatypes have reference count semantics. The idea here is to create a simple but non-contiguous datatype, perform an irecv with it, free it, and then create many new datatypes. If the datatype was freed and the space was reused, this test may detect an error.

Datatypes basic and derived

This program is derived from one in the MPICH-1 test suite. It tests a wide variety of basic and derived datatypes.

Datatypes comprehensive

This program is derived from one in the MPICH-1 test suite. This test sends and receives EVERYTHING from MPI_BOTTOM, by putting the data into a structure.

Datatypes

Tests for the presence of constants from MPI-1.0 and higher. It constructs small separate main programs in either C, FORTRAN, or C++ for each datatype. It fails if any datatype is not present. ISSUE: This test may time out if separate program executions initialize slowly.

Get_address math

This routine shows how math can be used on MPI addresses and verifies that it produces the correct result.

Get_elements contig

Uses a contig of a struct in order to satisfy two properties: (A) a type that contains more than one element type (the struct portion) (B) a type that has an odd number of ints in its "type contents" (1 in this case). This triggers a specific bug in some versions of MPICH.

Get_elements pair

Send a { double, int, double } tuple and receive as a pair of MPI_DOUBLE_INTs. This should (a) be valid, and (b) result in an element count of 3.
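To illustrate the counting involved, here is a small pure-Python model that does not call MPI. The helper names `get_elements` and `get_count`, and the use of `None` as a stand-in for MPI_UNDEFINED, are assumptions made for this sketch and are not taken from the test source.

```python
# Pure-Python model; no MPI library is used.
# Type signature of MPI_DOUBLE_INT as a sequence of basic elements.
DOUBLE_INT = ("double", "int")


def get_elements(received, recv_type, max_count):
    """Count basic elements received, after checking the signatures match."""
    expected = list(recv_type) * max_count
    if list(received) != expected[:len(received)]:
        raise ValueError("received data does not match the receive signature")
    return len(received)


def get_count(received, recv_type):
    """Count whole copies of recv_type; None stands in for MPI_UNDEFINED."""
    whole, rest = divmod(len(received), len(recv_type))
    return whole if rest == 0 else None


sent = ("double", "int", "double")  # the { double, int, double } tuple
elements = get_elements(sent, DOUBLE_INT, max_count=2)
count = get_count(sent, DOUBLE_INT)
print(elements, count)
```

In this model the message is a valid prefix of two MPI_DOUBLE_INT items, so the element count is 3, while the count of whole MPI_DOUBLE_INT items is not a whole number and is reported as undefined.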

Get_elements partial

Receive partial datatypes and check that MPI_Get_elements gives the correct result.

LONG_DOUBLE size

This test ensures that simplistic build logic/configuration did not result in a defined, yet incorrectly sized, MPI predefined datatype for long double and long double Complex. Based on a test suggested by Jim Hoekstra @ Iowa State University. The test also considers other datatypes that are optional in the MPI-3 specification.

Large counts for types

This test checks for large count functionality ("MPI_Count") mandated by MPI-3, as well as behavior of corresponding pre-MPI-3 interfaces that have better defined behavior when an "int" quantity would overflow.
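As a rough sketch of the overflow case, the following pure-Python model contrasts an "int"-valued query with an MPI_Count-valued one. It assumes a 32-bit C int and uses `None` as a hypothetical stand-in for MPI_UNDEFINED; the function names are illustrative and do not come from the test.

```python
# Pure-Python model; assumes a 32-bit C "int" (an assumption, not from the test).
INT_MAX = 2**31 - 1
UNDEFINED = None  # hypothetical stand-in for MPI_UNDEFINED


def size_as_int(count, elem_size):
    """Model of an int-valued query: reports UNDEFINED when the value overflows."""
    total = count * elem_size
    return total if total <= INT_MAX else UNDEFINED


def size_as_count(count, elem_size):
    """Model of an MPI_Count-valued query: the full value is representable."""
    return count * elem_size


small = size_as_int(1000, 8)
big_int = size_as_int(2**30, 8)
big_count = size_as_count(2**30, 8)
print(small, big_int, big_count)
```

The point of the sketch is only that the narrow interface signals an unrepresentable value instead of silently wrapping, while the wide interface returns the true size.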

Large types

This test checks that MPI can handle large datatypes.

Local pack/unpack basic

This test uses MPI_Pack() on a communication buffer, then calls MPI_Unpack() to confirm that the unpacked data matches the original. This routine performs all work within a single processor.

Noncontiguous datatypes

This test uses a structure datatype that describes data that is contiguous, but is manipulated as if it is noncontiguous. The test is designed to expose flaws in MPI memory management should they exist.

Pack/Unpack matrix transpose

This test confirms that an MPI packed matrix can be unpacked correctly by the MPI infrastructure.

Pack/Unpack multi-struct

This test confirms that packed structures, including array-of-struct and struct-of-struct unpack properly.

Pack/Unpack sliced

This test confirms that sliced arrays pack and unpack properly.

Pack/Unpack struct

This test confirms that a packed structure unpacks properly.

Pack basic

Tests functionality of MPI_Type_get_envelope() and MPI_Type_get_contents() on an MPI_FLOAT. Returns the number of errors encountered.

Pack_external_size

Tests functionality of MPI_Type_get_envelope() and MPI_Type_get_contents() on a packed-external MPI_FLOAT. Returns the number of errors encountered.

Pair types optional

Check for optional datatypes such as LONG_DOUBLE_INT.

Simple contig datatype

This test checks to see if we can create a simple datatype made from many contiguous copies of a single struct. The struct is built with monotone decreasing displacements to avoid any struct->contig optimizations.

Simple zero contig

Tests behavior with a zero-count contig.

Struct zero count

Tests behavior with a zero-count struct of builtins.

Type_commit basic

Verifies that MPI_Type_commit() succeeds.

Type_commit basic

This test builds datatypes using various components and confirms that MPI_Type_commit() succeeded.

Type_create_darray cyclic

Several cyclic checks of a custom struct darray.

Type_create_darray pack

Performs a sequence of tests building darrays with single-element blocks, running through all the various positions that the element might come from.

Type_create_darray pack many rank

Performs a sequence of tests building darrays with single-element blocks, running through all the various positions that the element might come from. Should be run with many ranks (at least 32).

Type_create_hindexed_block contents

This test is a simple check of MPI_Type_create_hindexed_block() using MPI_Type_get_envelope() and MPI_Type_get_contents().

Type_create_hindexed_block

Tests behavior with a hindexed_block that can be converted to a contig easily. This is specifically for coverage. Returns the number of errors encountered.

Type_create_resized 0 lower bound

Test of MPI datatype resized with 0 lower bound.

Type_create_resized lower bound

Test of MPI datatype resized with non-zero lower bound.

Type_create_resized

Tests behavior with resizing of a simple derived type.

Type_create_subarray basic

This test creates a subarray and confirms its contents.
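For readers unfamiliar with subarray types, the following pure-Python sketch shows which flat offsets a subarray selects from a larger array stored in C (row-major) order. The function name `subarray_offsets` and the 4 x 5 example are assumptions chosen for illustration; the actual test uses MPI_Type_create_subarray() and its own dimensions.

```python
from itertools import product


def subarray_offsets(sizes, subsizes, starts):
    """Row-major (C order) element offsets selected by a subarray."""
    offsets = []
    ranges = [range(s, s + n) for s, n in zip(starts, subsizes)]
    for index in product(*ranges):
        flat = 0
        for i, dim in zip(index, sizes):
            flat = flat * dim + i  # fold the multi-index into one offset
        offsets.append(flat)
    return offsets


full = list(range(4 * 5))  # a 4 x 5 array stored flat, value == offset
offsets = subarray_offsets((4, 5), (2, 3), (1, 1))
contents = [full[o] for o in offsets]
print(contents)
```

Confirming the contents of a subarray amounts to comparing values read through the datatype against a list like `contents` computed independently.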

Type_create_subarray pack/unpack

This test confirms that a packed sub-array can be properly unpacked.

Type_free memory

This test is used to confirm that memory is properly recovered from freed datatypes. The test may be run with valgrind or similar tools, or it may be run with MPI implementation specific options. For this test it is run only with standard MPI error checking enabled.

Type_get_envelope basic

This tests the functionality of MPI_Type_get_envelope() and MPI_Type_get_contents().

Type_hindexed zero

Tests hindexed types with all zero length blocks.

Type_hvector_blklen loop

Inspired by the Intel MPI_Type_hvector_blklen test. Added to include a test of a dataloop optimization that failed.

Type_hvector counts

Tests vector and struct type creation and commits with varying counts and odd displacements.

Type_indexed many

Type_indexed not compacted

Tests behavior with an indexed array that can be compacted but should continue to be stored as an indexed type. Specifically for coverage. Returns the number of errors encountered.

Type_{lb,ub,extent}

This test checks that both the upper and lower boundary of an hindexed MPI type are correct.

Type_struct() alignment

This routine checks the alignment of a custom datatype.

Type_struct basic

This test creates an MPI_Type_struct() datatype, assigns data and sends the structure to a second process. The second process receives the structure and confirms that the information contained in the structure agrees with the original data.

Type_vector blklen

This test is inspired by the Intel MPI_Type_vector_blklen test. The test fundamentally tries to deceive MPI into scrambling the data using padded struct types, and MPI_Pack() and MPI_Unpack(). The data is then checked to make sure the original data was not lost in the process. If "No errors" is reported, then the MPI functions that manipulated the data did not corrupt the test data.

Zero sized blocks

This test creates an empty packed indexed type, and then checks that the last 40 entries of the unpacked recv_buffer have the corresponding elements from the send buffer.

Collectives

This group features tests that utilize MPI collectives.

Allgather basic

Gather data from a vector to a contiguous vector for a selection of communicators. This is the trivial version based on the allgather test (allgatherv but with constant data sizes).

Allgather double zero

This test is similar to "Allgather in-place null", but uses MPI_DOUBLE with separate input and output arrays and performs an additional test for a zero byte gather operation.

Allgather in-place null

This is a test of MPI_Allgather() using MPI_IN_PLACE and MPI_DATATYPE_NULL to repeatedly gather data from a vector that increases in size each iteration for a selection of communicators.

Allgather intercommunicators

Allgather tests using a selection of intercommunicators and increasing array sizes. Processes are split into two groups and MPI_Allgather() is used to have each group send data to the other group and to send data from one group to the other.

Allgatherv 2D

This test uses MPI_Allgatherv() to define a two-dimensional table.

Allgatherv in-place

Gather data from a vector to a contiguous vector using MPI_IN_PLACE for a selection of communicators. This is the trivial version based on the coll/allgather tests with constant data sizes.

Allgatherv intercommunicators

Allgatherv test using a selection of intercommunicators and increasing array sizes. Processes are split into two groups and MPI_Allgatherv() is used to have each group send data to the other group and to send data from one group to the other. Similar to Allgather test (coll/icallgather).

Allgatherv large

This test is the same as Allgatherv basic (coll/coll6) except the size of the table is greater than the number of processors.

Allreduce flood

Tests the ability of the implementation to handle a flood of one-way messages by repeatedly calling MPI_Allreduce(). Test should be run with 2 processes.

Allreduce in-place

MPI_Allreduce() test using MPI_IN_PLACE for a selection of communicators.

Allreduce intercommunicators

Allreduce test using a selection of intercommunicators and increasing array sizes.

Allreduce mat-mult

This test implements a simple matrix-matrix multiply for a selection of communicators using a user-defined operation for MPI_Allreduce(). This is an associative but not commutative operation, where matSize is the matrix size. The number of matrices is the count argument, which is currently set to 1. The matrix is stored in C order, so that c(i,j) = cin[j+i*matSize].
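As a rough illustration of why rank order matters for this operation, the following pure-Python sketch models the reduction without calling any MPI library. The names `mat_mult` and `reduce_in_rank_order`, the 2 x 2 size, and the input matrices are hypothetical; only the C-order indexing cin[j+i*matSize] comes from the description above.

```python
from functools import reduce

MAT_SIZE = 2  # stands in for matSize; the value is chosen for illustration


def mat_mult(a, b, n=MAT_SIZE):
    """Multiply two n x n matrices stored flat in C order: c(i,j) = c[j + i*n]."""
    c = [0] * (n * n)
    for i in range(n):
        for j in range(n):
            c[j + i * n] = sum(a[k + i * n] * b[j + k * n] for k in range(n))
    return c


def reduce_in_rank_order(contributions):
    """Combine per-rank matrices as m0 * m1 * ... in ascending rank order."""
    return reduce(mat_mult, contributions)


# Hypothetical per-rank inputs: two matrices that do not commute.
rank0 = [1, 1,
         0, 1]
rank1 = [1, 0,
         1, 1]

in_order = reduce_in_rank_order([rank0, rank1])
swapped = reduce_in_rank_order([rank1, rank0])
print(in_order, swapped)
```

Because the two products differ, a library that reorders operands for an operation declared non-commutative would produce a detectably wrong result.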

Allreduce non-commutative

This tests MPI_Allreduce() using apparently non-commutative operators with a selection of communicators. This forces MPI to run code used for non-commutative operators.

Allreduce operations

This tests all possible MPI operation codes using the MPI_Allreduce() routine.

Allreduce user-defined

This example tests MPI_Allreduce() with user-defined operations using a selection of communicators similar to coll/allred3, but uses 3x3 matrices with integer-valued entries. This is an associative but not commutative operation. The number of matrices is the count argument. Tests using separate input and output matrices and using MPI_IN_PLACE. The matrix is stored in C order.

Allreduce user-defined long

Tests user-defined operation on a long value. Tests proper handling of possible pipelining in the implementation of reductions with user-defined operations.

Allreduce vector size

This tests MPI_Allreduce() using vectors with size greater than the number of processes for a selection of communicators.

Alltoall basic

Simple test for MPI_Alltoall().

Alltoall communicators

Tests MPI_Alltoall() by calling it with a selection of communicators and datatypes. Includes test using MPI_IN_PLACE.

Alltoall intercommunicators

Alltoall test using a selection of intercommunicators and increasing array sizes.

Alltoall threads

The listener thread waits for messages with tag REQ_TAG from any source (including the calling thread). Each thread enters an infinite loop that stops only when every node in MPI_COMM_WORLD has sent a message containing -1.

Alltoallv communicators

This program tests MPI_Alltoallv() by having each processor send different amounts of data to each processor using a selection of communicators. The test uses only MPI_INT which is adequate for testing systems that use point-to-point operations. Includes test using MPI_IN_PLACE.

Alltoallv halo exchange

This tests MPI_Alltoallv() by having each processor send data to two neighbors only, using counts of 0 for the other neighbors for a selection of communicators. This idiom is sometimes used for halo exchange operations. The test uses MPI_INT which is adequate for testing systems that use point-to-point operations.
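The count pattern behind this idiom can be sketched in pure Python without MPI. The sketch assumes the two neighbors are the left and right ranks on a periodic ring, which the description does not state; `halo_counts` is a hypothetical helper name.

```python
def halo_counts(rank, size, n):
    """Per-destination counts: n items to each ring neighbor, 0 to all others."""
    counts = [0] * size
    counts[(rank - 1) % size] = n  # left neighbor (periodic ring is an assumption)
    counts[(rank + 1) % size] = n  # right neighbor
    return counts


size, n = 5, 3
send_counts = [halo_counts(rank, size, n) for rank in range(size)]
# What rank r receives from rank s is what rank s sends to rank r.
recv_counts = [[send_counts[s][r] for s in range(size)] for r in range(size)]
print(send_counts[0])
```

With a symmetric neighbor relation, each rank's receive counts equal its send counts, so most entries passed to the collective are zero.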

Alltoallv intercommunicators

This program tests MPI_Alltoallv using int array and a selection of intercommunicators by having each process send different amounts of data to each process. This test sends i items to process i from all processes.

Alltoallw intercommunicators

This program tests MPI_Alltoallw by having each process send different amounts of data to each process. This test is similar to the Alltoallv test (coll/icalltoallv), but with displacements in bytes rather than units of the datatype. This test sends i items to process i from all processes.
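The difference between the two displacement conventions can be sketched in pure Python. The helper names are hypothetical, the buffer is assumed to be packed with no gaps between blocks, and the element is assumed to be a C int; none of these details are taken from the test source.

```python
import struct

INT_SIZE = struct.calcsize("i")  # size of a C int on this platform


def counts_for(size):
    """Every sender sends i items to process i."""
    return list(range(size))


def displs_in_elements(counts):
    """Alltoallv-style displacements: prefix sums in units of the datatype."""
    displs, offset = [], 0
    for c in counts:
        displs.append(offset)
        offset += c
    return displs


def displs_in_bytes(counts, elem_size):
    """Alltoallw-style displacements: the same prefix sums, scaled to bytes."""
    return [d * elem_size for d in displs_in_elements(counts)]


counts = counts_for(4)
elem_displs = displs_in_elements(counts)
byte_displs = displs_in_bytes(counts, INT_SIZE)
print(counts, elem_displs, byte_displs)
```

Passing element-unit displacements where byte displacements are expected (or the reverse) addresses the wrong part of the buffer, which is the kind of mistake this pair of tests can expose.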

Alltoallw matrix transpose

Tests MPI_Alltoallw() by performing a blocked matrix transpose operation. This more detailed example test was taken from MPI - The Complete Reference, Vol 1, p 222-224. Please refer to this reference for more details of the test.

Alltoallw matrix transpose comm

This program tests MPI_Alltoallw() by having each processor send different amounts of data to all processors. This is similar to the "Alltoallv communicators" test, but with displacements in bytes rather than units of the datatype. Currently, the test uses only MPI_INT which is adequate for testing systems that use point-to-point operations. Includes test using MPI_IN_PLACE.

Alltoallw zero types

This test makes sure that counts with non-zero-sized types on the send (recv) side match and don't cause a problem with non-zero counts and zero-sized types on the recv (send) side when using MPI_Alltoallw and MPI_Alltoallv. Includes tests using MPI_IN_PLACE.

BAND operations

Test MPI_BAND (bitwise and) operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

BOR operations

Test MPI_BOR (bitwise or) operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

BXOR Operations

Test MPI_BXOR (bitwise excl or) operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

Barrier intercommunicators

This test checks that MPI_Barrier() accepts intercommunicators. It does not check the semantics of an intercomm barrier (all processes in the local group can exit when, but not before, all processes in the remote group enter the barrier).

Bcast basic

Test broadcast with various roots, datatypes, and communicators.

Bcast intercommunicators

Broadcast test using a selection of intercommunicators and increasing array sizes.

Bcast intermediate

Test broadcast with various roots, datatypes, sizes that are not powers of two, larger message sizes, and communicators.

Bcast sizes

Tests MPI_Bcast() repeatedly using MPI_INT with a selection of data sizes.

Bcast zero types

Tests broadcast behavior with non-zero counts but zero-sized types.

Collectives array-of-struct

Tests various calls to MPI_Reduce(), MPI_Bcast(), and MPI_Allreduce() using arrays of structs.

Exscan basic

Simple test of MPI_Exscan() using single element int arrays.

Exscan communicators

Tests MPI_Exscan() using int arrays and a selection of communicators and array sizes. Includes tests using MPI_IN_PLACE.

Extended collectives

Checks if "extended collectives" are supported, i.e., collective operations with MPI-2 intercommunicators.

Gather 2D

This test uses MPI_Gather() to define a two-dimensional table.

Gather basic

This test gathers data from a vector to contiguous datatype using doubles for a selection of communicators and array sizes. Includes test for zero length gather using MPI_IN_PLACE.

Gather communicators

This test gathers data from a vector to contiguous datatype using a double vector for a selection of communicators. Includes a zero length gather and a test to ensure aliasing is disallowed correctly.

Gather intercommunicators

Gather test using a selection of intercommunicators and increasing array sizes.

Gatherv 2D

This test uses MPI_Gatherv() to define a two-dimensional table. This test is similar to Gather test (coll/coll2).

Gatherv intercommunicators

Gatherv test using a selection of intercommunicators and increasing array sizes.

Iallreduce basic

Simple test for MPI_Iallreduce() and MPI_Allreduce().

Ibarrier

This test calls MPI_Ibarrier() followed by a conditional loop containing usleep(1000) and MPI_Test(). This test hung indefinitely under some MPI implementations.

LAND operations

Test MPI_LAND (logical and) operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

LOR operations

Test MPI_LOR (logical or) operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

LXOR operations

Test MPI_LXOR (logical excl or) operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

MAXLOC operations

Test MPI_MAXLOC operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.
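For reference, the combining rule of MPI_MAXLOC on (value, index) pairs can be modeled in a few lines of pure Python. The function name `maxloc` and the sample pairs are illustrative; the sketch models only the rule itself, not the optional datatypes this test targets.

```python
from functools import reduce


def maxloc(a, b):
    """Keep the larger value; on a tie, keep the smaller index."""
    (va, ia), (vb, ib) = a, b
    if va > vb:
        return a
    if vb > va:
        return b
    return (va, min(ia, ib))


# Hypothetical per-rank contributions as (value, rank) pairs.
pairs = [(3.0, 0), (7.0, 1), (7.0, 2), (1.0, 3)]
result = reduce(maxloc, pairs)
print(result)
```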

MAX operations

Test MPI_MAX operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

MINLOC operations

Test MPI_MINLOC operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

MIN operations

Test MPI_MIN operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

MScan

Tests user defined collective operations for MPI_Scan(). The operations are inoutvec[i] += invec[i] op inoutvec[i] and inoutvec[i] = invec[i] op inoutvec[i] (see MPI-1.3 Message-Passing Interface section 4.9.4). The order of operation is important. Note that the computation is in process rank (in the communicator) order independent of the root process.
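The rank-ordered form inoutvec[i] = invec[i] op inoutvec[i] can be modeled in pure Python as an inclusive scan. The sketch below uses string concatenation as a stand-in operation because it is associative but not commutative; the names `user_op` and `inclusive_scan` are hypothetical and no MPI library is involved.

```python
def user_op(invec, inoutvec):
    """Elementwise invec[i] op inoutvec[i]; op is string concatenation here."""
    return [a + b for a, b in zip(invec, inoutvec)]


def inclusive_scan(per_rank, op):
    """Rank r receives v0 op v1 op ... op vr, combined in rank order."""
    results, acc = [], None
    for vec in per_rank:
        # acc holds the partial result from lower ranks and plays the role of invec.
        acc = list(vec) if acc is None else op(acc, vec)
        results.append(acc)
    return results


per_rank = [["a", "x"], ["b", "y"], ["c", "z"]]  # hypothetical contributions
scanned = inclusive_scan(per_rank, user_op)
print(scanned)
```

Any deviation from rank order would show up directly in the concatenated strings, which is why the order of operation is important for such operators.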

Non-blocking basic

This is a weak sanity test that all non-blocking collectives specified by MPI-3 are present in the library and accept arguments as expected. This test does not check for progress, matching issues, or sensible output buffer values.

Non-blocking intracommunicator

This is a basic test of all 17 non-blocking collective operations specified by the MPI-3 standard. It only exercises the intracommunicator functionality, does not use MPI_IN_PLACE, and only transmits/receives simple integer types with relatively small counts. It does check a few fancier issues, such as ensuring that "premature user releases" of MPI_Op and MPI_Datatype objects do not result in a segfault.

Non-blocking overlapping

This test attempts to execute multiple non-blocking collective (NBC) MPI routines at the same time, and manages their completion with a variety of routines (MPI_{Wait,Test}{,_all,_any,_some}). The test also exercises a few point-to-point operations.

Non-blocking wait

This is a weak sanity test that all non-blocking collectives specified by MPI-3 are present in the library and take arguments as expected. Includes calls to MPI_Wait() immediately following non-blocking collective calls. This test does not check for progress, matching issues, or sensible output buffer values.

Op_{create,commute,free}

A simple test of MPI_Op_create(), MPI_Op_commutative(), and MPI_Op_free() on predefined reduction operations and both commutative and non-commutative user defined operations.

PROD operations

Test MPI_PROD operations using MPI_Reduce() on optional datatypes. Note that failing this test does not mean that there is something wrong with the MPI implementation.

Reduce/Bcast multi-operation

This test repeats pairs of calls to MPI_Reduce() and MPI_Bcast() using different reduction operations and checks for errors.

Reduce/Bcast user-defined

Tests user defined collective operations for MPI_Reduce() followed by MPI_Bcast(). The operation is inoutvec[i] = invec[i] op inoutvec[i] (see MPI-1.3 Message-Passing Interface section 4.9.4). The order of operation is important. Note that the computation is in process rank (in the communicator) order independent of the root process.

Reduce/Bcast user-defined

This test calls MPI_Reduce() and MPI_Bcast() with a user defined operation.

Reduce_Scatter_block large data

Test of reduce scatter block with large data (needed in MPICH to trigger the long-data algorithm). Each processor contributes its rank + the index to the reduction, then receives the ith sum. Can be called with any number of processors.

Reduce_Scatter intercomm. large

Test of reduce scatter block with large data on a selection of intercommunicators (needed in MPICH to trigger the long-data algorithm). Each processor contributes its rank + the index to the reduction, then receives the ith sum. Can be called with any number of processors.

Reduce_Scatter large data

Test of reduce scatter with large data (needed to trigger the long-data algorithm). Each processor contributes its rank + index to the reduction, then receives the "ith" sum. Can be run with any number of processors.

Reduce_Scatter user-defined

Test of reduce scatter using user-defined operations. Checks that the non-commutative operations are not commuted and that all of the operations are performed.

Reduce any-root user-defined

This test implements a simple matrix-matrix multiply with an arbitrary root using MPI_Reduce() on user-defined operations for a selection of communicators. This is an associative but not commutative operation. For a matrix size of matSize, the matrix is stored in C order where c(i,j) is cin[j+i*matSize].

Reduce basic

A simple test of MPI_Reduce() with the rank of the root process shifted through each possible value using a selection of communicators.

Reduce communicators user-defined

This test implements a simple matrix-matrix multiply using MPI_Reduce() on user-defined operations for a selection of communicators. This is an associative but not commutative operation. For a matrix size of matSize, the matrix is stored in C order where c(i,j) is cin[j+i*matSize].

Reduce intercommunicators

Reduce test using a selection of intercommunicators and increasing array sizes.

Reduce_local basic

A simple test of MPI_Reduce_local(). Uses MPI_SUM as well as user defined operators on arrays of increasing size.

Reduce_scatter basic

Test of reduce scatter. Each processor contributes its rank plus the index to the reduction, then receives the ith sum. Can be called with any number of processors.

Reduce_scatter_block basic

Test of reduce scatter block. Each process contributes its rank plus the index to the reduction, then receives the ith sum. Can be called with any number of processors.
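The expected values for this pattern can be worked out with a short pure-Python model. It assumes one element per receiving process and a sum reduction; the function name `reduce_scatter_block_sum` and the choice of four processes are illustrative, not taken from the test.

```python
def reduce_scatter_block_sum(sendbufs, recvcount):
    """Sum the send buffers elementwise, then hand block r to process r."""
    size = len(sendbufs)
    reduced = [sum(column) for column in zip(*sendbufs)]
    return [reduced[r * recvcount:(r + 1) * recvcount] for r in range(size)]


size = 4  # any number of processes works in this model
# Process `rank` contributes rank + i at index i.
sendbufs = [[rank + i for i in range(size)] for rank in range(size)]
received = reduce_scatter_block_sum(sendbufs, recvcount=1)
# Closed form: sum over ranks of (rank + i) = size*i + size*(size-1)/2.
expected = [[size * i + size * (size - 1) // 2] for i in range(size)]
print(received)
```

Checking against the closed form lets each process validate its own block without gathering data from the others.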

Reduce_scatter_block user-def

Test of reduce scatter block using user-defined operations to check that non-commutative operations are not commuted and that all operations are performed. Can be called with any number of processors.

Reduce_scatter intercommunicators

Test of reduce scatter with large data on a selection of intercommunicators (needed in MPICH to trigger the long-data algorithm). Each processor contributes its rank + the index to the reduction, then receives the ith sum. Can be called with any number of processors.

SUM operations

This test looks at integer or integer related datatypes not required by the MPI-3.0 standard (e.g. long long) using MPI_Reduce(). Note that failure to support these datatypes is not an indication of a non-compliant MPI implementation.

Scan basic

A simple test of MPI_Scan() on predefined operations and user-defined operations with inoutvec[i] = invec[i] op inoutvec[i] (see 4.9.4 of the MPI standard 1.3) and inoutvec[i] += invec[i] op inoutvec[i]. The order is important. Note that the computation is in process rank (in the communicator) order, independent of the root.

Scatter 2D

This test uses MPI_Scatter() to define a two-dimensional table. See also Gather test (coll/coll2) and Gatherv test (coll/coll3) for similar tests.

Scatter basic

This MPI_Scatter() test sends a vector and receives individual elements, except for the root process that does not receive any data.

Scatter contiguous

This MPI_Scatter() test sends contiguous data and receives a vector on some nodes and contiguous data on others. There is some evidence that some MPI implementations do not check recvcount on the root process. This test checks for that case.

Scatter intercommunicators

Scatter test using a selection of intercommunicators and increasing array sizes.

Scatterv 2D

This test uses MPI_Scatterv() to define a two-dimensional table.

Scatter vector-to-1

This MPI_Scatter() test sends a vector and receives individual elements.

Scatterv intercommunicators

Scatterv test using a selection of intercommunicators and increasing array sizes.

Scatterv matrix

This is an example of using scatterv to send a matrix from one process to all others, with the matrix stored in Fortran order. Note the use of an explicit upper bound (UB) to enable the sources to overlap. This test uses scatterv to make sure that it uses the datatype size and extent correctly. It requires the number of processors used in the call to MPI_Dims_create.

User-defined many elements

Test user-defined operations for MPI_Reduce() with a large number of elements. Added because a talk at EuroMPI'12 claimed that these failed with more than 64k elements.

MPI_Info Objects

The info tests emphasize the MPI Info object functionality.

MPI_Info_delete basic

This test exercises the MPI_Info_delete() function.

MPI_Info_dup basic

This test exercises the MPI_Info_dup() function.

MPI_Info_get basic

This is a simple test of the MPI_Info_get() function.

MPI_Info_get ext. ins/del

Test of info that makes use of the extended handles, including inserts and deletes.

MPI_Info_get extended

Test of info that makes use of the extended handles.

MPI_Info_get ordered

This is a simple test that illustrates how named keys are ordered.

MPI_Info_get_valuelen basic

Simple info set and get_valuelen test.

MPI_Info_set/get basic

Simple info set and get test.

Dynamic Process Management

This group features tests that add processes to a running communicator, join separately started applications, and handle faults/failures.

Creation group intercomm test

In this test processes create an intracommunicator, and creation is collective only on the members of the new communicator, not on the parent communicator. This is accomplished by building up and merging intercommunicators starting with MPI_COMM_SELF for each process involved.

MPI_Comm_accept basic

This test exercises MPI_Open_port(), MPI_Comm_accept(), and MPI_Comm_disconnect().

MPI_Comm_connect 2 processes

This test checks to make sure that two MPI_Comm_connects to two different MPI ports match their corresponding MPI_Comm_accepts.

MPI_Comm_connect 3 processes

This test checks to make sure that three MPI_Comm_connects to three different MPI ports match their corresponding MPI_Comm_accepts.

MPI_Comm_disconnect basic

A simple test of Comm_disconnect with a master and 2 spawned ranks.

MPI_Comm_disconnect-reconnect basic

A simple test of Comm_connect/accept/disconnect.

MPI_Comm_disconnect-reconnect groups

This test exercises the disconnect code for processes that span process groups. This test spawns a group of processes and then merges them into a single communicator. Then the single communicator is split into two communicators, one containing the even ranks and the other the odd ranks. Then the two new communicators do MPI_Comm_accept/connect/disconnect calls in a loop. The even group does the accepting while the odd group does the connecting.
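The even/odd split and the role each group plays can be modeled in pure Python. The names `split_by_parity` and `roles`, and the choice of five ranks, are hypothetical; the real test performs the split on an MPI communicator.

```python
def split_by_parity(ranks):
    """Partition merged-communicator ranks into even and odd groups."""
    groups = {"even": [], "odd": []}
    for r in sorted(ranks):
        groups["even" if r % 2 == 0 else "odd"].append(r)
    return groups


# Role each group plays in the accept/connect/disconnect loop.
roles = {"even": "accept", "odd": "connect"}

groups = split_by_parity(range(5))  # five ranks chosen for illustration
for name, members in groups.items():
    print(name, members, roles[name])
```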

MPI_Comm_disconnect-reconnect repeat

This test spawns two child jobs and has them open a port and connect to each other. The two children repeatedly connect, accept, and disconnect from each other.

MPI_Comm_disconnect send0-1

A test of Comm_disconnect with a master and 2 spawned ranks, after sending from rank 0 to 1.

MPI_Comm_disconnect send1-2

A test of Comm_disconnect with a master and 2 spawned ranks, after sending from rank 1 to 2.

MPI_Comm_join basic

A simple test of Comm_join.

MPI_Comm_spawn basic

A simple test of Comm_spawn.

MPI_Comm_spawn complex args

A simple test of Comm_spawn, with complex arguments.

MPI_Comm_spawn inter-merge

A simple test of Comm_spawn, followed by intercomm merge.

MPI_Comm_spawn many args

A simple test of Comm_spawn, with many arguments.

MPI_Comm_spawn_multiple appnum

This tests spawn_mult by using the same executable and no command-line options. The attribute MPI_APPNUM is used to determine which executable is running.

MPI_Comm_spawn_multiple basic

A simple test of Comm_spawn_multiple with info.

MPI_Comm_spawn repeat

A simple test of Comm_spawn, called twice.

MPI_Comm_spawn with info

A simple test of Comm_spawn with info.

MPI_Intercomm_create

Use Spawn to create an intercomm, then create a new intercomm that includes processes not in the initial spawn intercomm. This test ensures that spawned processes are able to communicate with processes that were not in the communicator from which they were spawned.

MPI_Publish_name basic

This test confirms the functionality of MPI_Open_port() and MPI_Publish_name().

MPI spawn-connect-accept send/recv

Spawns two processes, one connecting and one accepting. It synchronizes with each then waits for them to connect and accept. The connector and acceptor respectively send and receive some data.

MPI spawn-connect-accept

Spawns two processes, one connecting and one accepting. It synchronizes with each then waits for them to connect and accept.

MPI spawn test with threads

Create a thread for each task. Each thread will spawn a child process to perform its task.

Multispawn

This (is a placeholder for a) test that creates 4 threads, each of which does a concurrent spawn of 4 more processes, for a total of 17 MPI processes. The resulting intercomms are tested for consistency (to ensure that the spawns didn't get confused among the threads). As an option, it will time the Spawn calls. If the spawn calls block the calling thread, this may show up in the timing of the calls.

Process group creation

In this test, processes create an intracommunicator, and creation is collective only on the members of the new communicator, not on the parent communicator. This is accomplished by building up and merging intercommunicators using Connect/Accept to merge with a master/controller process.

Taskmaster threaded

This is a simple test that creates threads to verify compatibility between MPI and the pthread library.

Threads

This group features tests that utilize thread compliant MPI implementations. This includes the threaded environment provided by MPI-3.0, as well as POSIX compliant threaded libraries such as PThreads.

Alltoall threads

The listener thread waits for messages with tag REQ_TAG from any source (including the calling thread). Each thread enters an infinite loop that stops only when every node in MPI_COMM_WORLD has sent a message containing -1.

MPI_T multithreaded

This test is adapted from test/mpi/mpi_t/mpit_vars.c, but it is a multithreaded version in which multiple threads call MPI_T routines.

With verbose set, thread 0 prints out the MPI_T control variables, performance variables, and their categories.

Multiple threads context dup

This test creates communicators concurrently in different threads.

Multiple threads context idup

This test creates communicators concurrently, non-blocking, in different threads.

Multiple threads dup leak

This test repeatedly duplicates and frees communicators with multiple threads concurrently to stress the multithreaded aspects of the context ID allocation code. Thanks to IBM for providing the original version of this test.

Multispawn

This (is a placeholder for a) test that creates 4 threads, each of which does a concurrent spawn of 4 more processes, for a total of 17 MPI processes. The resulting intercomms are tested for consistency (to ensure that the spawns didn't get confused among the threads). As an option, it will time the Spawn calls. If the spawn calls block the calling thread, this may show up in the timing of the calls.

Multi-target basic

Run concurrent sends to a single target process. Stresses an implementation that permits concurrent sends to different targets.

Multi-target many

Run concurrent sends to different target processes. Stresses an implementation that permits concurrent sends to different targets.

Multi-target non-blocking

Run concurrent sends to different target processes. Stresses an implementation that permits concurrent sends to different targets. Uses non-blocking sends, and has a single thread complete all I/O.

Multi-target non-blocking send/recv

Run concurrent sends to different target processes. Stresses an implementation that permits concurrent sends to different targets. Uses non-blocking sends and recvs, and has a single thread complete all I/O.

Multi-target self

Send to self in a threaded program.

Multi-threaded [non]blocking

This test exercises blocking and non-blocking capability within MPI.

Multi-threaded send/recv

The buffer size needs to be large enough to cause the rendezvous (rndv) protocol to be used. If the MPI provider doesn't use a rendezvous protocol then the size doesn't matter.

Simple thread comm dup

This is a simple test of threads in MPI with communicator duplication.

Simple thread comm idup

This is a simple test of threads in MPI with non-blocking communicator duplication.

Simple thread finalize

This simple test checks that MPI_Finalize() exits, so its only action is to write "No errors".

Simple thread initialize

The test initializes a thread, then calls MPI_Finalize() and prints "No errors".

Taskmaster threaded

This is a simple test that creates threads to verify compatibility between MPI and the pthread library.

Thread Group creation

Every thread participates in a distinct MPI_Comm_create_group() call, distinguished by its thread-id (used as the tag). Threads on even ranks join an even comm and threads on odd ranks join the odd comm.

Thread/RMA interaction

This is a simple test of threads in MPI.

Threaded group

In this test a number of threads are created, each with a distinct MPI communicator (or comm) group distinguished by its thread-id (used as a tag). Threads on even ranks join an even comm and threads on odd ranks join the odd comm.
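The even/odd membership rule can be sketched as a plain Python model. The helper names parity_groups and group_for are hypothetical; the sketch only shows which ranks would share a comm and which tag distinguishes the creation call, and it does not call MPI.

```python
def parity_groups(size):
    """Split ranks 0..size-1 into the even group and the odd group."""
    even = [rank for rank in range(size) if rank % 2 == 0]
    odd = [rank for rank in range(size) if rank % 2 == 1]
    return even, odd


def group_for(rank, thread_id, size):
    """Describe the group a given thread on a given rank would join."""
    even, odd = parity_groups(size)
    members = even if rank % 2 == 0 else odd
    # The thread-id is used as the tag, so threads on the same rank
    # take part in distinct group creations.
    return {"tag": thread_id, "members": members}
```

For example, thread 2 on rank 3 of 6 ranks would join the odd group [1, 3, 5] using tag 2.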

Threaded ibsend

This program performs a short test of MPI_Bsend in a multithreaded environment. It starts a single receiver thread that expects NUMSENDS messages, and NUMSENDS sender threads, each of which uses MPI_Bsend to send a message of size MSGSIZE to its right neighbour, or to rank 0 if my_rank == comm_size-1, i.e. target_rank = (my_rank+1)%size.

After all messages have been received, the receiver thread prints a message, the threads are joined into the main thread and the application terminates.
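The neighbour rule target_rank = (my_rank+1)%size can be checked with a short sketch. It is written in plain Python as an illustration only; the function name is hypothetical.

```python
def target_rank(my_rank, comm_size):
    """Right neighbour in a ring; the last rank wraps around to rank 0."""
    return (my_rank + 1) % comm_size


def ring_targets(comm_size):
    """Target of every rank, in rank order."""
    return [target_rank(rank, comm_size) for rank in range(comm_size)]
```

With 4 ranks, ring_targets(4) gives [1, 2, 3, 0], so every rank receives from exactly one sender.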

Threaded request

Threaded generalized request tests.

Threaded wait/test

Threaded wait/test request tests.

MPI-Toolkit Interface

This group features tests that involve the MPI Tool interface available in MPI-3.0 and higher.

MPI_T 3.1 get index call

Tests that the MPI 3.1 Toolkit interface *_get_index name lookup functions work as expected.

MPI_T cycle variables

This test prints out all MPI_T control variables, performance variables and their categories in the MPI implementation.

MPI_T multithreaded

This test is adapted from test/mpi/mpi_t/mpit_vars.c, but it is a multithreaded version in which multiple threads call MPI_T routines.

With verbose set, thread 0 prints out MPI_T control variables, performance variables and their categories.

MPI_T string handling

This test checks that MPI_T string handling works as expected.

MPI_T write variable

This test writes to control variables exposed by the MPI_T functionality of MPI-3.0.

MPI-3.0

This group features tests that exercise MPI-3.0 and higher functionality. Note that the test suite was designed to be compiled and executed under all versions of MPI. If the version of MPI used by the test suite is less than MPI-3.0, the executed code will report "MPI-3.0 or higher required" and will exit.

Aint add and diff

Tests the MPI 3.1 standard functions MPI_Aint_diff and MPI_Aint_add.

C++ datatypes

This test checks for the existence of four new C++ named predefined datatypes that should be accessible from C and Fortran.

Comm_create_group excl 4 rank

This test using 4 processes creates a group with the even processes using MPI_Group_excl() and uses this group to create a communicator. Then both the communicator and group are freed.
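The effect of MPI_Group_excl() here can be modeled in plain Python. This is a sketch, assuming the even group is formed by excluding the odd ranks; how the test builds its exclusion list is not stated above, and the helper names are hypothetical.

```python
def group_excl(group, excluded_ranks):
    """Model of group exclusion: drop members at the listed ranks, keep order."""
    excluded = set(excluded_ranks)
    return [member for rank, member in enumerate(group) if rank not in excluded]


def even_group(size):
    """Even-rank group obtained by excluding the odd ranks (assumed approach)."""
    odd_ranks = [rank for rank in range(size) if rank % 2 == 1]
    return group_excl(list(range(size)), odd_ranks)
```

With 4 processes the resulting group is [0, 2]; with 8 processes it is [0, 2, 4, 6].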

Comm_create_group excl 8 rank

This test using 8 processes creates a group with the even processes using MPI_Group_excl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group incl 2 rank

This test using 2 processes creates a group with ranks less than size/2 using MPI_Group_range_incl() and uses this group to create a communicator. Then both the communicator and group are freed.
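MPI_Group_range_incl() takes (first, last, stride) triplets whose last rank is inclusive. The sketch below models that in plain Python; the triplet (0, size/2 - 1, 1) used to select ranks less than size/2 is an assumption, and the helper names are hypothetical.

```python
def group_range_incl(group, ranges):
    """Model of range inclusion: each (first, last, stride) includes last."""
    members = []
    for first, last, stride in ranges:
        stop = last + (1 if stride > 0 else -1)  # make Python's range inclusive
        members.extend(group[rank] for rank in range(first, stop, stride))
    return members


def lower_half(size):
    """Ranks less than size/2, selected with a single assumed triplet."""
    return group_range_incl(list(range(size)), [(0, size // 2 - 1, 1)])
```

For 2, 4 and 8 processes this selects [0], [0, 1] and [0, 1, 2, 3] respectively.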

Comm_create_group incl 4 rank

This test using 4 processes creates a group with ranks less than size/2 using MPI_Group_range_incl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group incl 8 rank

This test using 8 processes creates a group with ranks less than size/2 using MPI_Group_range_incl() and uses this group to create a communicator. Then both the communicator and group are freed.

Comm_create_group random 2 rank

This test using 2 processes creates and frees groups by randomly adding processes to a group, then creating a communicator with the group.

Comm_create_group random 4 rank

This test using 4 processes creates and frees groups by randomly adding processes to a group, then creating a communicator with the group.

Comm_create_group random 8 rank

This test using 8 processes creates and frees groups by randomly adding processes to a group, then creating a communicator with the group.

Comm_idup 2 rank

Multiple tests using 2 processes that make rank 0 wait in a blocking receive until all other processes have called MPI_Comm_idup(), then call idup afterwards. Should ensure that idup doesn't deadlock. Includes a test using an intercommunicator.

Comm_idup 4 rank

Multiple tests using 4 processes that make rank 0 wait in a blocking receive until all other processes have called MPI_Comm_idup(), then call idup afterwards. Should ensure that idup doesn't deadlock. Includes a test using an intercommunicator.

Comm_idup 9 rank

Multiple tests using 9 processes that make rank 0 wait in a blocking receive until all other processes have called MPI_Comm_idup(), then call idup afterwards. Should ensure that idup doesn't deadlock. Includes a test using an intercommunicator.

Comm_idup multi

Simple test creating multiple communicators with MPI_Comm_idup.

Comm_idup overlap

Each pair of processes uses MPI_Comm_idup() to dup the communicator such that the dups are overlapping. If this were done with MPI_Comm_dup(), it would deadlock.

Comm_split_type basic

Tests MPI_Comm_split_type() including a test using MPI_UNDEFINED.

Comm_with_info dup 2 rank

This test exercises MPI_Comm_dup_with_info() with 2 processes by setting the info for a communicator, duplicating it, and then testing the communicator.

Comm_with_info dup 4 rank

This test exercises MPI_Comm_dup_with_info() with 4 processes by setting the info for a communicator, duplicating it, and then testing the communicator.

Comm_with_info dup 9 rank

This test exercises MPI_Comm_dup_with_info() with 9 processes by setting the info for a communicator, duplicating it, and then testing the communicator.

Compare_and_swap contention

Tests MPI_Compare_and_swap using self communication, neighbor communication, and communication with the root causing contention.

Datatype get structs

This test was motivated by the failure of an example program for RMA involving simple operations on a struct that included a struct. The observed failure was a SEGV in the MPI_Get.

Fetch_and_op basic

This simple set of tests executes the MPI_Fetch_and_op() calls on RMA windows using a selection of datatypes with multiple different communicators, communication patterns, and operations.

Get_accumulate basic

Get Accumulate Test. This is a simple test of MPI_Get_accumulate() on a local window.

Get_accumulate communicators

Get Accumulate Test. This simple set of tests executes MPI_Get_accumulate on RMA windows using a selection of datatypes with multiple different communicators, communication patterns, and operations.

Iallreduce basic

Simple test for MPI_Iallreduce() and MPI_Allreduce().

Ibarrier

This test calls MPI_Ibarrier() followed by a conditional loop containing usleep(1000) and MPI_Test(). This test hung indefinitely under some MPI implementations.
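The poll-and-sleep pattern can be sketched without MPI. In this plain Python model, ToyIbarrier and its start and test methods are hypothetical stand-ins for the non-blocking barrier and the completion check; threads play the role of processes.

```python
import threading
import time


class ToyIbarrier:
    """Hypothetical stand-in for a non-blocking barrier shared by N parties."""

    def __init__(self, parties):
        self._parties = parties
        self._arrived = 0
        self._lock = threading.Lock()

    def start(self):
        # Register arrival and return immediately (non-blocking).
        with self._lock:
            self._arrived += 1

    def test(self):
        # Complete only once every party has started the barrier.
        with self._lock:
            return self._arrived == self._parties


def worker(barrier, completed, index):
    barrier.start()
    while not barrier.test():
        time.sleep(0.001)  # same role as usleep(1000) in the description
    completed[index] = True


def run_ibarrier(parties=4):
    barrier = ToyIbarrier(parties)
    completed = [False] * parties
    threads = [
        threading.Thread(target=worker, args=(barrier, completed, i))
        for i in range(parties)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return all(completed)
```

If the completion check never reported success, the loop would spin forever, which corresponds to the hang mentioned above.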

Large counts for types

This test checks for large count functionality ("MPI_Count") mandated by MPI-3, as well as behavior of corresponding pre-MPI-3 interfaces that have better defined behavior when an "int" quantity would overflow.

Large types

This test checks that MPI can handle large datatypes.

Linked list construction fetch/op

This test constructs a distributed shared linked list using MPI-3 dynamic windows with MPI_Fetch_and_op. Initially process 0 creates the head of the list, attaches it to an RMA window, and broadcasts the pointer to all processes. All processes then concurrently append N new elements to the list. When a process attempts to attach its element to the tail of the list, it may discover that its tail pointer is stale, and it must chase ahead to the new tail before the element can be attached.
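The stale-tail chase can be illustrated with a shared-memory model. This sketch uses Python threads in place of processes and a lock-protected compare-and-swap helper in place of the RMA atomics; both substitutions are assumptions made for illustration, and all names are hypothetical.

```python
import threading

NIL = -1


class SharedList:
    """Toy shared list: next[i] holds the index of element i's successor."""

    def __init__(self):
        self.next = [NIL]  # element 0 is the head
        self._lock = threading.Lock()

    def new_element(self):
        with self._lock:
            self.next.append(NIL)
            return len(self.next) - 1

    def compare_and_swap_next(self, elem, expected, new):
        # Atomically set next[elem] to new if it equals expected; return old value.
        with self._lock:
            old = self.next[elem]
            if old == expected:
                self.next[elem] = new
            return old


def append_elements(shared, count):
    tail = 0  # each worker starts with the head as its guess for the tail
    for _ in range(count):
        elem = shared.new_element()
        while True:
            seen = shared.compare_and_swap_next(tail, NIL, elem)
            if seen == NIL:
                tail = elem  # attached; the new element is now the tail
                break
            tail = seen  # stale tail pointer: chase ahead and retry


def build_list(num_workers, per_worker):
    shared = SharedList()
    workers = [
        threading.Thread(target=append_elements, args=(shared, per_worker))
        for _ in range(num_workers)
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    length, node = 0, 0
    while node != NIL:  # walk from the head and count reachable elements
        length += 1
        node = shared.next[node]
    return length
```

Every element is attached exactly once, so the walk from the head finds the head plus num_workers * per_worker elements.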

Linked_list construction

Construct a distributed shared linked list using MPI-3 dynamic windows. Initially process 0 creates the head of the list, attaches it to the window, and broadcasts the pointer to all processes. Each process "p" then appends N new elements to the list when the tail reaches process "p-1".

Linked list construction lockall

Construct a distributed shared linked list using MPI-3 dynamic RMA windows. Initially process 0 creates the head of the list, attaches it to the window, and broadcasts the pointer to all processes. All processes then concurrently append N new elements to the list. When a process attempts to attach its element to the tail of the list, it may discover that its tail pointer is stale, and it must chase ahead to the new tail before the element can be attached. This version of the test uses MPI_Win_lock_all() instead of MPI_Win_lock(MPI_LOCK_EXCLUSIVE, ...).

Linked_list construction lock excl

MPI-3 distributed linked list construction example. Construct a distributed shared linked list using proposed MPI-3 dynamic windows. Initially process 0 creates the head of the list, attaches it to the window, and broadcasts the pointer to all processes. Each process "p" then appends N new elements to the list when the tail reaches process "p-1". The test uses the MPI_LOCK_EXCLUSIVE argument with MPI_Win_lock().

Linked-list construction lock shr

This test constructs a distributed shared linked list using MPI-3 dynamic windows. Initially process 0 creates the head of the list, attaches it to the window, and broadcasts the pointer to all processes. Each process "p" then appends N new elements to the list when the tail reaches process "p-1". This test is similar to Linked_list construction test 2 (rma/linked_list_bench_lock_excl) but uses an MPI_LOCK_SHARED parameter to MPI_Win_lock().

Linked_list construction put/get